Develop your technical literacy

and creative design skills

For more information, visit:

www.mat.ucsb.edu/mad

Abstract

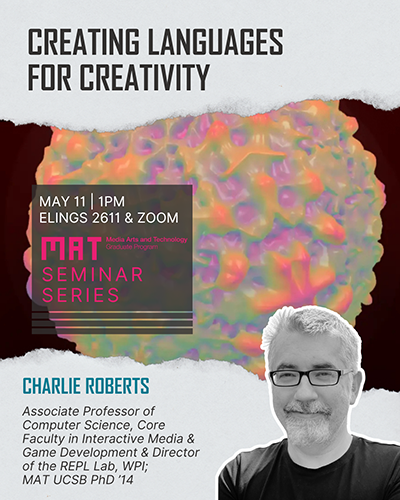

Developing programming languages can come with mystique for artists unfamiliar with the practice. But creating your own language opens up different expressive possibilities than relying on languages designed with different motivations and aesthetic concerns. In this talk I will discuss tools developed in my lab and in collaboration with other researchers to help demystify this process and make it more accessible to creative coders. I will then show two new languages for live audiovisual performance that I developed using similar concepts and tools. The first is screamer, a graphical language for creating generative visuals in the style of the demoscene computer art subculture. The second is mutter, a musical language for exploring temporal recursions and granular synthesis.

Bio

Charlie Roberts is an Associate Professor of Computer Science at Worcester Polytechnic Institute and core faculty in the Interactive Media & Game Development program. At WPI, Roberts leads the REPL (Realtime Expressive Programming Lab), researching programming languages, environments, and libraries in digital arts practice. Educators have used these systems to teach students computational media at dozens of universities, and Roberts has given live coding performances with them across North America, Europe, and Asia.

For more information about the MAT Seminar Series, go to:

seminar.mat.ucsb.edu.

Abstract

This project develops open-source tooling for interacting with industry-standard spatial audio. Music, film, and television streaming platforms have adopted object-based spatial audio support. Dolby Atmos, the most prominent contemporary format, is tied to proprietary software for authoring, rendering, and production.

This thesis project provides the Cult DSP Spatial Toolchain for the flexible playback and transcoding of industry-standard spatial audio outside of proprietary infrastructure. Primary contributions include Spatial Root, a layout-agnostic spatial audio playback engine; CULT (Coordinates and Universal Localization Transcoding) Transcoder, for translating and packaging scene metadata; and LUSID (Lightweight Universal Spatial Interaction Data), a readable, intermediate scene metadata format. The paper discusses the development and usage of each module, as well as Spatial Seed, a procedural up-mixing experiment built using the toolchain’s components, which explores repurposed object-based material for interactive media and authoring contexts. The toolchain’s development argues that interoperable open software infrastructure can make industry-standard spatial audio more portable across research, development, and nonstandard playback systems, while establishing an architectural framework that can inform future work with adjacent formats beyond ADM.

Abstract

This project investigates how to achieve and perceptually evaluate the benefits of extending head-tracked binaural audio from three degrees of freedom (3DoF) to six degrees of freedom (6DoF) for digital piano players. Building on previous 3DoF research that tracked only rotational head movements, this study evaluates a 6DoF system that captures both rotational and translational movements during digital piano performance. A real-time HRTF-based binaural rendering system was developed using a custom plugin integrated with face-tracking technology in a Max/MSP–DAW environment to process 6DoF movement data alongside MIDI input from the digital piano. A perceptual evaluation compared the 3DoF and 6DoF tracking modes across five attributes (Dynamic Spatial Realism, Spatial Clarity, Source Stability, Envelopment, and Preference) and demonstrated significant improvements for 6DoF tracking in four of the five. These findings validate the importance of capturing pianists' natural translational head movements and demonstrate that 6DoF head-tracked binaural audio offers enhanced realism and immersion that more closely approximates the acoustic piano experience.

Çağlarcan described his winning piece "Shadows" as an audiovisual transdisciplinary artwork that explores spiritual and social connections as his music overlays a selection of oil paintings by his brother, Güneş Çağlarcan, an accomplished painter and pianist.

For more information please read the article in the UCSB Current online magazine.

The project, Embodied Ink, was showcased at MAT's End of Year Show this past Spring.

Read the full paper here:

dl.acm.org/doi/10.1145/3746027.3756139

Video Presentation:

www.youtube.com/watch?v=08egiTo7yto

The fellowship allows Croskey to pursue a project that she is passionate about: enabling marginalized communities to secure their place in the future historical record, and ensuring that emergent technologies, such as AI, elevate and empower these groups by reflecting their histories.

“Receiving the NSF GRFP amid our current political climate has given me an even greater sense of responsibility to pursue my research with full force,” Croskey said.

Read more in the UCSB College of Engineering Newsletter.

This year’s theme was “Myths and Legends”. Other artists receiving the award with Professor Kuchera-Morin were Mary Heebner, Gabriela Ruiz, Manjari Sharma, and Diana Thater.

The software creates personalized visuals and abstract art in an immersive landscape that is based on the memories of the crew members. The news articles highlight their work on a software pipeline that was being used at the St. Kliment Ohridski base on Livingston Island, Antarctica.

For more information, please see:

UCSB's The Current news magazine article:

New frontiers for well-being in Antarctica and isolated spaces.

Santa Barbara Independent article:

UC Santa Barbara Researchers Design Tools to Combat Isolation in Extreme Environments.

Iason Paterakis, Nefeli Manoudaki - AI driven visuals: Icescape

Iason Paterakis, Nefeli Manoudaki - AI driven visuals: Beach

Iason Paterakis, Nefeli Manoudaki - AI driven visuals: Plains

The title of the NSF award is Dynamic Control Systems for Manual-Computational Fabrication. Professor Jacobs was awarded the NSF CAREER Award to further her research in integrating skilled manual and material production with computational fabrication.

The CAREER Program offers the NSF's most prestigious awards in support of early-career faculty who have the potential to serve as academic role models in research and education and to lead advances in the mission of their department or organization.

Professor Jacobs thanks all of the amazing members of the Expressive Computation Lab, whose research contributed the intellectual foundations of this award.

UCSB News: Making Automation More Human Through Innovative Fabrication Tools

NSF link: Dynamic Control Systems for Manual-Computational Fabrication

Media Arts and Technology (MAT) at UCSB is a transdisciplinary graduate program that fuses emergent media, computer science, engineering, electronic music and digital art research, practice, production, and theory. Created by faculty in both the College of Engineering and the College of Letters and Science, MAT offers an unparalleled opportunity for working at the frontiers of art, science, and technology, where new art forms are born and new expressive media are invented.

In MAT, we seek to define and to create the future of media art and media technology. Our research explores the limits of what is possible in technologically sophisticated art and media, both from an artistic and an engineering viewpoint. Combining art, science, engineering, and theory, MAT graduate studies provide students with a combination of critical and technical tools that prepare them for leadership roles in artistic, engineering, production/direction, educational, and research contexts.

The program offers Master of Science and Ph.D. degrees in Media Arts and Technology. MAT students may focus on an area of emphasis (multimedia engineering, electronic music and sound design, or visual and spatial arts), but all students should strive to transcend traditional disciplinary boundaries and work with other students and faculty in collaborative, multidisciplinary research projects and courses.